7 failure modes every AI coding platform bakes in

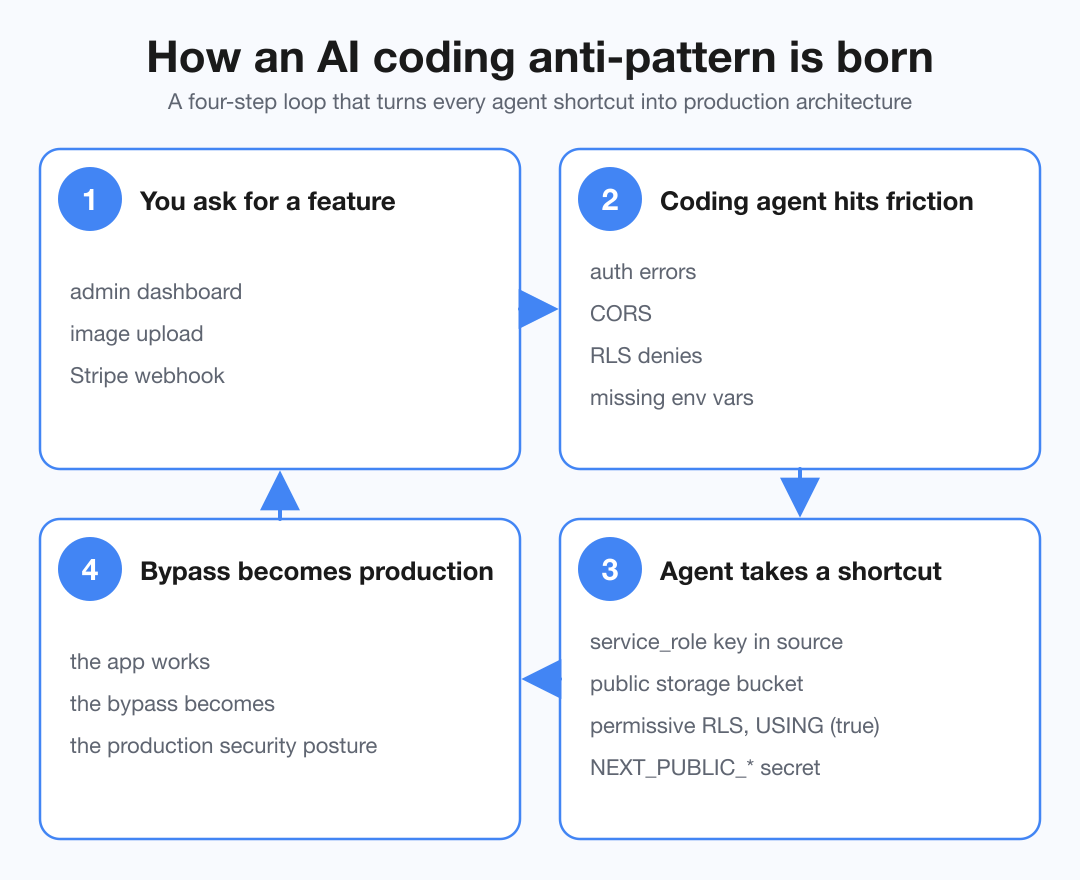

TL;DR: AI coding platforms make insecure design choices whenever an agent hits friction, and those shortcuts become the production security posture. OpenSourceMalware unbundles seven failure modes that recur across every major agent and explains why they happen.

Last week was a tough one for vibe coders. Vercel customers had to rotate credentials after attackers reached Vercel through Context.ai, and Lovable admitted that project endpoints skipped ownership checks, exposing source code and AI chat history.

Seven anti-patterns recur when Lovable, v0, Bolt.new, Base44, Replit, Cursor, Windsurf, Codex, and Claude Code ship and integrate software:

- Service-role keys in source code. Supabase has an anon key for client use under RLS and a `service_role` key that bypasses RLS and belongs only on the server. When the agent needs to perform an admin operation (creating a user from a webhook, running a migration, seeding data), it reaches for `service_role` and places it in browser-bundled code, `.env.example` files, or project chat history. Same pattern wherever a service has both a public and a privileged key (Stripe, OpenAI, Anthropic, SendGrid, Twilio).

- Public env var prefixes used as a workaround. Vite (`VITE_*`) and Next.js (`NEXT_PUBLIC_*`) inline matching env vars into client bundles. Agents use the prefix when they need a value available in the browser, turning secrets like `NEXT_PUBLIC_OPENAI_API_KEY` into values any visitor can recover from DevTools.

- RLS off or permissive by default. When Lovable or Bolt creates Supabase tables for users, RLS is often disabled or written as `USING (true)` or `USING (auth.uid() IS NOT NULL)`: authenticated-only access without tenant ownership. The CVE-2025-48757 cluster and most BOLA findings in vibe-coded apps sit on this failure mode.

- Bad package choices. Agents pick dependencies by pattern-matching training data instead of verifying what is current. Two sub-modes recur: out-of-date stacks (older majors, deprecated packages) and hallucinated names (libraries that do not exist, were renamed, or are unofficial forks). Bad actors slop-squat the hallucinated names. See also 8 of 13 LLM pentest frameworks fabricating their own success.

- Storage buckets flipped to public. Supabase Storage and S3 default to private, but agents flip them to public for image uploads because signed URLs, server-side proxying, and storage-schema RLS are more work. Predictable bucket URL patterns make enumeration feasible.

- Webhook handlers without signature verification. `stripe.webhooks.constructEvent`, GitHub's `X-Hub-Signature-256`, and Resend signing secrets get skipped because they need a separate env var, raw-body parser config, and extra try/catch. The shipped endpoint trusts any JSON-shaped payload at the documented URL. For Stripe, a premium-granting webhook becomes a public "give me premium access" endpoint.

- Hardcoded fallback secrets. `process.env.JWT_SECRET || "secret"` and `os.environ.get("SECRET_KEY", "dev-key-change-in-prod")` make first boot easy, then silently become the production signing secret when no real env var is set. Hunt for literals like `"your-secret-here"` or `"changeme"` in deployed agent apps.
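To make the webhook item concrete, here is a minimal sketch of the check that tends to get skipped, modeled on the `sha256=<hex digest>` form of GitHub's `X-Hub-Signature-256` header. The function names `sign_payload` and `is_valid_signature` are hypothetical, and the sketch assumes the handler has access to the raw request bytes, not a re-serialized JSON object. Stripe and Resend use their own header formats and SDK helpers, so treat this as an illustration of the idea, not a replacement for those helpers.

```python
import hashlib
import hmac
from typing import Optional


def sign_payload(secret: bytes, raw_body: bytes) -> str:
    """Build a header value in the 'sha256=<hex digest>' form."""
    digest = hmac.new(secret, raw_body, hashlib.sha256).hexdigest()
    return f"sha256={digest}"


def is_valid_signature(
    secret: bytes, raw_body: bytes, header_value: Optional[str]
) -> bool:
    """Return True only if the header matches an HMAC of the exact bytes received."""
    if not header_value:
        # Unsigned requests are rejected instead of being trusted by default.
        return False
    expected = sign_payload(secret, raw_body)
    # Constant-time comparison avoids leaking how much of the value matched.
    return hmac.compare_digest(expected.encode(), header_value.encode())
```

A handler would call `is_valid_signature` before parsing the body and return an error response when it is `False`. The raw-body requirement is the part agents tend to trip over: verifying against parsed-then-re-encoded JSON can fail even for legitimate requests, which is one reason the check gets removed.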

My take:

- Agents optimize for "make it work," and security loses by default. Every pattern has the same loop: the agent hits friction, picks the shortest bypass, the app works, and the bypass becomes production architecture.

- The integration layer is the real vulnerability surface. Software design and integration patterns assume a human makes each trade-off, and humans often get it wrong. Agents now make those trade-offs invisibly, until an incident surfaces them. With more people building software, the incidents are becoming more frequent and more visible.

- The prompt is not a sufficient guardrail. LLMs violate rules expressed in system instructions in 20 to 60 percent of cases, which leads to incidents like production data deletions.
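If prompt rules are unreliable, one option is to move a rule into code that fails loudly. The sketch below targets the hardcoded-fallback pattern from the list above: it refuses to return a secret that is missing, too short, or one of the placeholder literals quoted earlier. The names `require_secret` and `MissingSecretError` are hypothetical, and the 16-character minimum is an arbitrary assumption, not a recommendation from the original analysis.

```python
import os
from typing import Mapping, Optional

# Placeholder values quoted in the list above; extend for your own codebase.
PLACEHOLDER_SECRETS = {
    "secret",
    "dev-key-change-in-prod",
    "your-secret-here",
    "changeme",
}


class MissingSecretError(RuntimeError):
    """Raised when a required secret is absent, a placeholder, or too short."""


def require_secret(
    name: str,
    env: Optional[Mapping[str, str]] = None,
    min_length: int = 16,  # assumed threshold, for illustration only
) -> str:
    """Return the secret stored under `name`, or raise instead of falling back."""
    env = os.environ if env is None else env
    value = env.get(name, "").strip()
    if not value:
        raise MissingSecretError(f"{name} is not set")
    if value.lower() in PLACEHOLDER_SECRETS:
        raise MissingSecretError(f"{name} is a known placeholder value")
    if len(value) < min_length:
        raise MissingSecretError(f"{name} is shorter than {min_length} characters")
    return value
```

Calling `require_secret("JWT_SECRET")` once at startup turns a silent insecure default into a boot failure, which is much harder for an agent to paper over than a line in a system prompt.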