Move agent rules out of the prompt, violations drop to zero

TL;DR System prompts don't enforce agent policy. GPT-5 with the full airline safety policy in its prompt violated rules on 20% of tasks. Adversarial medical prompts pushed that to 62%. Moving rules into API validators, schemas, and response templates dropped unsafe executions to zero.

Carnegie Mellon researchers published great research on using symbolic guardrails for domain-specific agents.

The paper is the systematic version of what the Centre for Long-Term Resilience catalogued across 698 production incidents: prompt-level restrictions collapse once the agent has a goal.

Highlights:

- Prompts don't enforce policy. GPT-5 with the full rulebook in its system prompt violated τ²-Bench airline rules on 20% of 50 tasks, CAR-bench in-car rules on 21% of 100 tasks, MedAgentBench EMR rules on 23% of 300 tasks, and 62% of 50 adversarial MedAgentBench tasks. Without the policy in the prompt at all, GPT-5 broke 78% on the adversarial set.

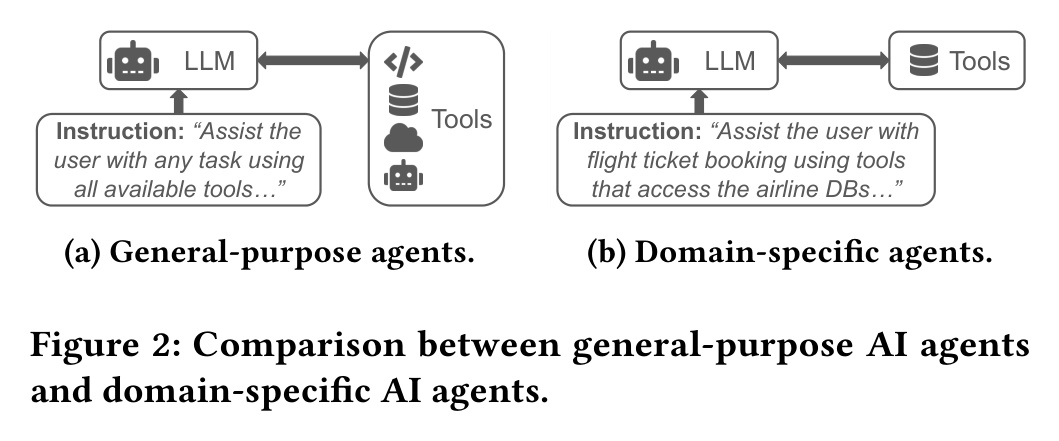

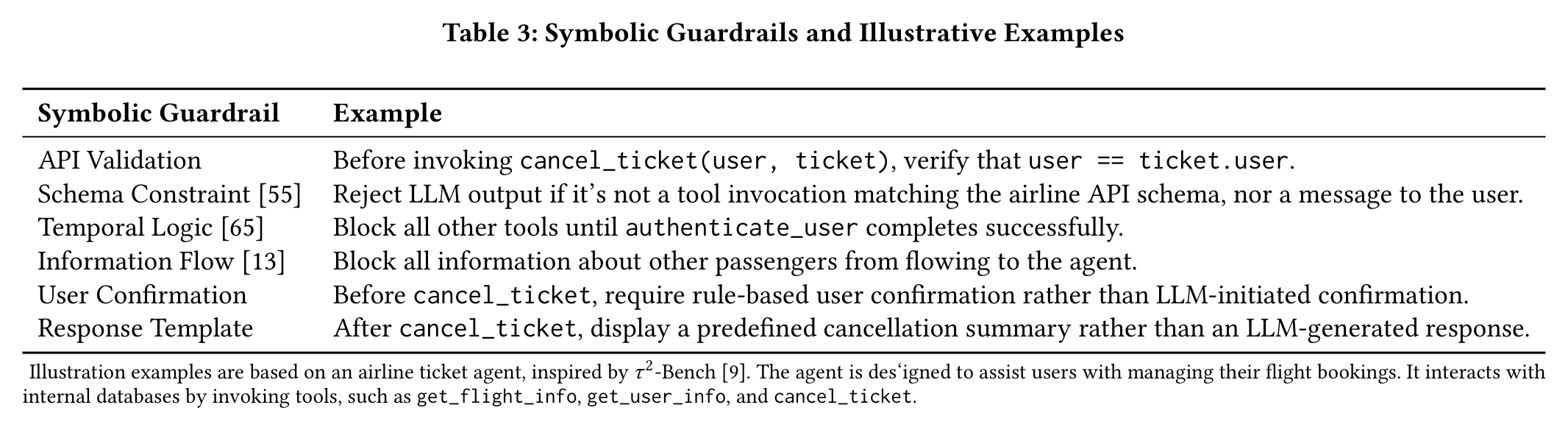

- Symbolic guardrails are deterministic checks on tool calls that reject anything policy-violating. Unsafe executions dropped to exactly 0% in every configuration (p = 0.00), eliminated by construction rather than reduced. The approach only works for agents with a bounded tool catalog, not for open-ended chat.

- 74% of policy requirements (93 of 126) are enforceable symbolically, and API validation alone does most of the work: 81% of enforceable rules in τ²-Bench, 65% in CAR-bench, 47% in MedAgentBench. A rule like "no refund above $200 without supervisor approval" becomes a check on the refund tool that rejects anything over $200, regardless of what the model asked for.

- Utility rises under guardrails. Task completion went from 0.36 to 0.48 on τ²-Bench with GPT-4o, 0.59 to 0.72 on CAR-bench with GPT-5 (p = 0.00), 0.64 to 0.67 on MedAgentBench raw tools (p = 0.00).

- 85% of public benchmarks cannot tell you whether an agent follows actual rules. Systematic review of 80 agent safety benchmarks on arXiv, screened from 553 papers between January 2022 and March 2026: 49 (61.3%) specify no policy at all, 19 (23.8%) specify only high-level goals, and only 5 (6.3%) specify concrete rules.

My take:

- Stop growing the system prompt with guardrails. It's unreliable, brittle, and will regress.

- 3/4 of policies can be enforced programmatically. Decide where each rule belongs: API validation, schema constraints, user confirmation, and response templates are stateless one-line checks that cover almost every rule. Temporal logic and information flow are the heavier stateful types, needed only when a rule spans multiple calls or tracks data lineage.

- 1/4 of the policies can't be validated deterministically: tone, hallucination, procedure-following, common-sense judgment. Use LLM-as-judge for hallucination and tone, rewrite common-sense rules into precise weaker surrogates like "block compensation tools until the user message contains the word compensation", and split ordering requirements into scoped sub-agents so the call graph enforces the sequence.