A Vercel Breach Deep Dive That Doesn't Sell You a Security Product

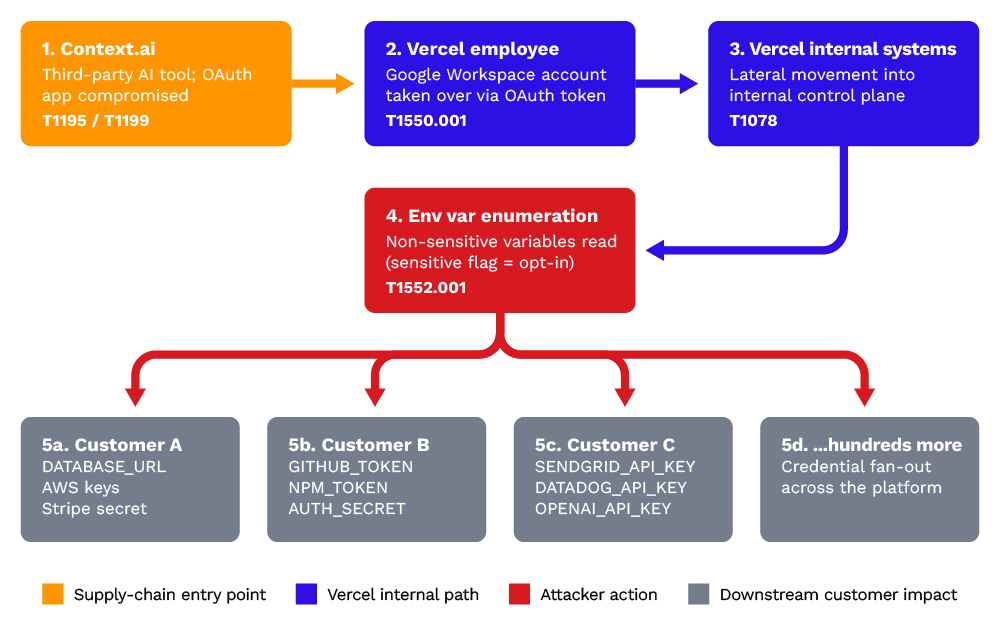

TL;DR: A Vercel employee signed up for a third-party AI productivity tool using their corporate Google Workspace account. Two months later, that single grant had turned into the exfiltration of plaintext customer environment variables from Vercel's internal systems. No exploit. No zero-day. No MFA bypass.

Stage 0. The lure.

A Context.ai employee with sensitive access privileges searched for Roblox game exploits and downloaded a trojanized binary. The payload was Lumma Stealer.

Stage 1. Endpoint compromise at Context.ai (February 2026).

Lumma Stealer harvested data from the employee's workstation:

- Google Workspace credentials.

- Browser session tokens.

- OAuth tokens.

- Keys and logins for Supabase, Datadog, and Authkit.

- Credentials capable of authenticating to Context.ai's AWS environment.

Stage 2. AWS access at Context.ai (~March 2026).

The attacker used the stolen employee credentials to access Context.ai's AWS environment.

Stage 3. OAuth token exfiltration (March 2026).

Inside AWS, the attacker exfiltrated the OAuth token store backing Context AI Office Suite, a product launched in June 2025. That store held Google Workspace OAuth tokens issued to Context.ai for users who had authorized the product's OAuth app, including a Vercel employee. Context.ai did not identify the OAuth token exfiltration itself; it was surfaced later during Vercel's investigation.

Stage 4. Google Workspace account access at Vercel (March 2026).

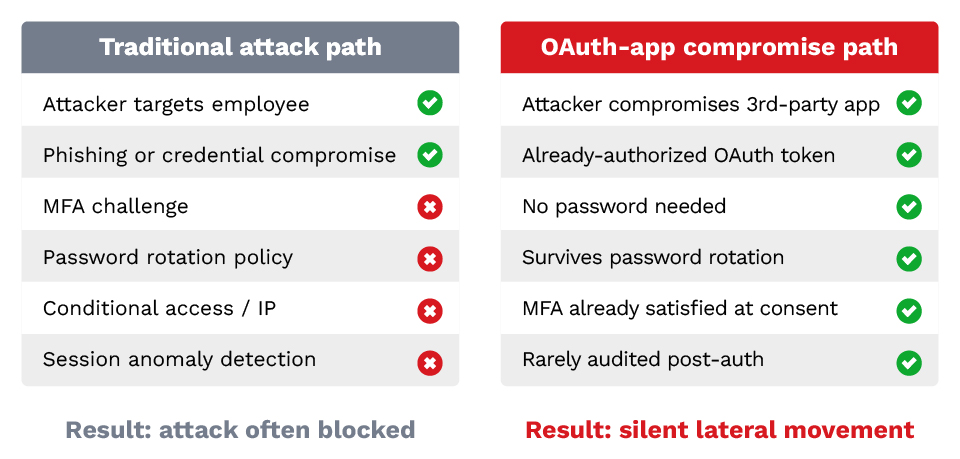

The attacker used the exfiltrated OAuth token to access the Vercel employee's Google Workspace account. OAuth refresh tokens are long-lived and are exchanged for fresh access tokens without any user interaction, so MFA never comes into play.
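The reason MFA never triggers is visible in the protocol itself: a refresh-token grant (RFC 6749, section 6) carries the client credentials and the refresh token, and nothing else. The sketch below only builds such a request against Google's token endpoint and never sends it. The client ID, secret, and token values are placeholders, and whether the attacker also obtained Context.ai's client secret is not stated in the disclosures summarized here.

```python
"""Sketch: what an OAuth 2.0 refresh-token grant carries (RFC 6749, section 6).

Assumption: all credential values are placeholders. The request is built
for inspection only and is never sent.
"""
from urllib.parse import parse_qs, urlencode
from urllib.request import Request

GOOGLE_TOKEN_ENDPOINT = "https://oauth2.googleapis.com/token"


def build_refresh_request(client_id: str, client_secret: str, refresh_token: str) -> Request:
    # The whole grant: client credentials plus the refresh token.
    # There is no field for a password, a one-time code, or any second factor.
    body = urlencode({
        "grant_type": "refresh_token",
        "client_id": client_id,
        "client_secret": client_secret,
        "refresh_token": refresh_token,
    }).encode()
    return Request(
        GOOGLE_TOKEN_ENDPOINT,
        data=body,
        headers={"Content-Type": "application/x-www-form-urlencoded"},
        method="POST",
    )


if __name__ == "__main__":
    req = build_refresh_request("example-client-id", "example-secret", "example-refresh-token")
    print(req.get_method(), req.full_url)
    print(sorted(parse_qs(req.data.decode())))
```

Whoever holds a valid refresh token (plus the client credentials) can keep minting access tokens until the grant is revoked, which is why revocation, not MFA, is the control that matters here.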

Stage 5. Pivot into Vercel internal systems (March to April 2026).

Using the compromised Workspace identity, the attacker accessed Vercel internal systems and enumerated customer environment variables.

Stage 6. Environment variable exfiltration.

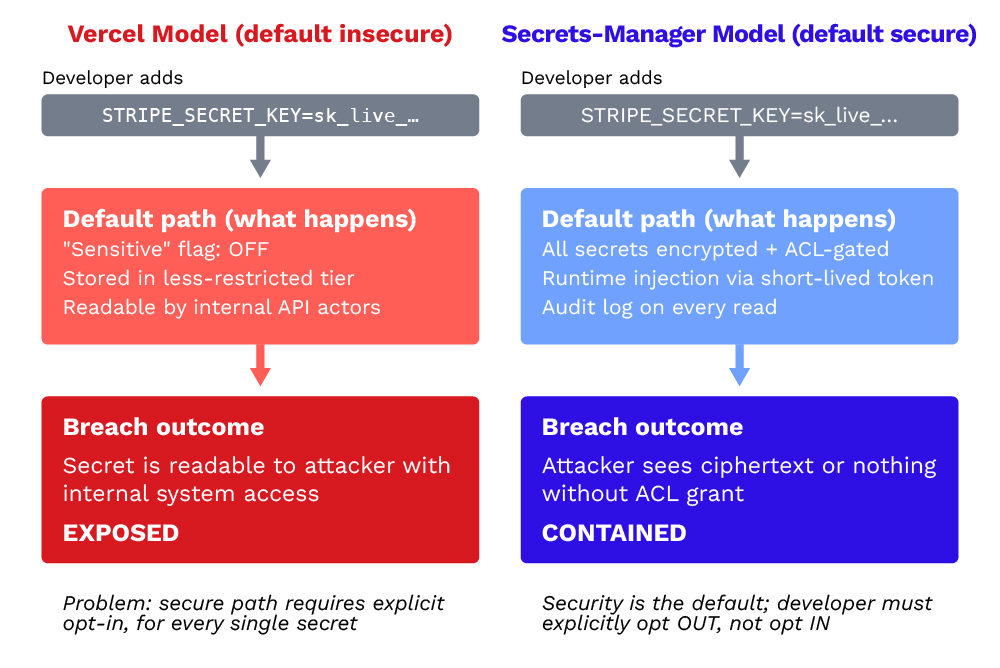

The attacker exfiltrated an undisclosed number of customer secrets stored as Vercel environment variables. By default, environment variables in Vercel are stored in plaintext.

The types of secrets potentially exposed:

- Database: `DATABASE_URL`, `POSTGRES_PASSWORD`

- Cloud: `AWS_ACCESS_KEY_ID`, `AWS_SECRET_ACCESS_KEY`

- Payments: `STRIPE_SECRET_KEY`, `STRIPE_WEBHOOK_SECRET`

- Auth: `AUTH0_SECRET`, `NEXTAUTH_SECRET`

- Email: `SENDGRID_API_KEY`, `POSTMARK_TOKEN`

- Monitoring: `DATADOG_API_KEY`, `SENTRY_DSN`

- Source: `GITHUB_TOKEN`, `NPM_TOKEN`

- AI/ML: `OPENAI_API_KEY`, `ANTHROPIC_API_KEY`
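If you ran workloads on Vercel during this period, a practical first step is to turn each project's variables into a rotation list. A minimal sketch, assuming you have the variables in a dotenv file (for example one produced by `vercel env pull`). The category map mirrors the list above and is not exhaustive, so every unrecognized name lands in an "Other" bucket instead of being skipped.

```python
"""Sketch: turn a pulled dotenv file into a secret-rotation checklist.

Assumptions: the file came from something like `vercel env pull`, and the
category map mirrors the list of exposed secret types; it is not exhaustive.
"""
import sys
from pathlib import Path

EXPOSED_BY_CATEGORY = {
    "Database": {"DATABASE_URL", "POSTGRES_PASSWORD"},
    "Cloud": {"AWS_ACCESS_KEY_ID", "AWS_SECRET_ACCESS_KEY"},
    "Payments": {"STRIPE_SECRET_KEY", "STRIPE_WEBHOOK_SECRET"},
    "Auth": {"AUTH0_SECRET", "NEXTAUTH_SECRET"},
    "Email": {"SENDGRID_API_KEY", "POSTMARK_TOKEN"},
    "Monitoring": {"DATADOG_API_KEY", "SENTRY_DSN"},
    "Source": {"GITHUB_TOKEN", "NPM_TOKEN"},
    "AI/ML": {"OPENAI_API_KEY", "ANTHROPIC_API_KEY"},
}


def env_names(text: str) -> set[str]:
    """Collect variable names from KEY=value lines, skipping blanks and comments."""
    names = set()
    for raw in text.splitlines():
        line = raw.strip()
        if not line or line.startswith("#") or "=" not in line:
            continue
        key = line.split("=", 1)[0].strip()
        if key.startswith("export "):
            key = key[len("export "):].strip()
        names.add(key)
    return names


def rotation_checklist(text: str) -> dict[str, list[str]]:
    """Group variable names by category; names outside the map go under "Other"."""
    present = env_names(text)
    checklist: dict[str, list[str]] = {}
    for category, keys in EXPOSED_BY_CATEGORY.items():
        hits = sorted(keys & present)
        if hits:
            checklist[category] = hits
    # Unrecognized names were potentially exposed as well, so list them too.
    known = set().union(*EXPOSED_BY_CATEGORY.values())
    other = sorted(present - known)
    if other:
        checklist["Other"] = other
    return checklist


if __name__ == "__main__":
    path = Path(sys.argv[1] if len(sys.argv) > 1 else ".env")
    for category, keys in rotation_checklist(path.read_text()).items():
        print(f"{category}: rotate {', '.join(keys)}")
```

Rotating means issuing a new credential at the provider and revoking the old one; re-saving the same value in Vercel changes nothing.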

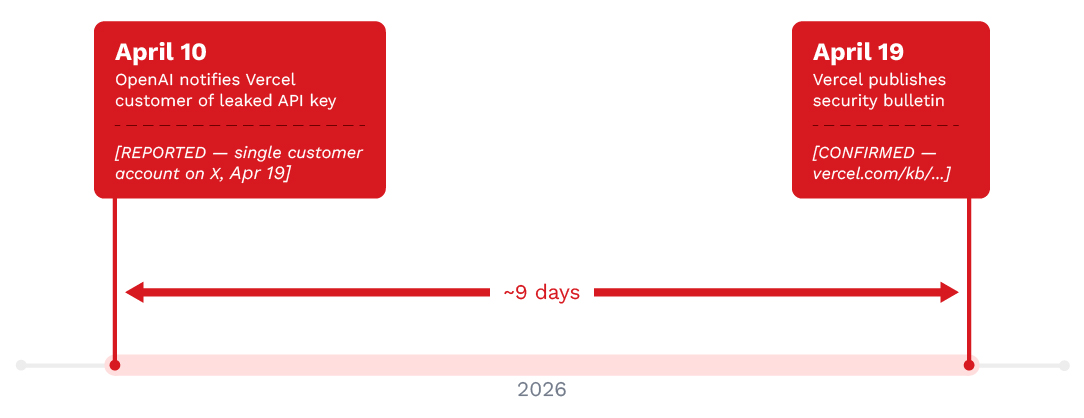

Stage 7. First external signal (April 10, 2026).

OpenAI's automated secret scanner notified a Vercel customer that one of their API keys had been leaked in the wild.
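How OpenAI's scanner works is not described in the sources. A common approach, used for example by GitHub secret scanning, is to match known key formats in public text and notify the issuer. A minimal sketch of that matching step; the regular expressions are approximations of public key prefixes chosen for illustration, not any vendor's real rules.

```python
"""Sketch: prefix-based detection of leaked API keys.

The patterns approximate public key formats; they are illustrative
assumptions, not OpenAI's or any other vendor's actual detection rules.
"""
import re

PATTERNS = {
    "anthropic": re.compile(r"\bsk-ant-[A-Za-z0-9_-]{20,}"),
    # Negative lookahead so Anthropic keys are not double-counted as OpenAI keys.
    "openai": re.compile(r"\bsk-(?!ant-)[A-Za-z0-9_-]{20,}"),
    "stripe_live_secret": re.compile(r"\bsk_live_[A-Za-z0-9]{24,}"),
    "github_pat": re.compile(r"\bghp_[A-Za-z0-9]{36}\b"),
    "npm": re.compile(r"\bnpm_[A-Za-z0-9]{36}\b"),
    "aws_access_key_id": re.compile(r"\bAKIA[0-9A-Z]{16}\b"),
}


def scan(text: str) -> list[tuple[str, str]]:
    """Return (kind, masked value) for every candidate key found in text."""
    findings = []
    for kind, pattern in PATTERNS.items():
        for match in pattern.finditer(text):
            value = match.group(0)
            # Never echo a full secret back into logs.
            findings.append((kind, value[:8] + "..."))
    return findings


if __name__ == "__main__":
    import sys

    for kind, masked in scan(sys.stdin.read()):
        print(f"{kind}: {masked}")
```

Pattern matching alone produces false positives; production scanners typically confirm a hit with the issuing provider before alerting anyone.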

Stage 8. Public disclosure (April 19, 2026).

Nine days later, Vercel published its security bulletin, and its CEO posted an X thread naming Context.ai as the compromised third party. He stated that Vercel "believes the attacking group to be highly sophisticated and, I strongly suspect, significantly accelerated by AI."

Indicator of compromise:

Context.ai OAuth Client ID: 110671459871-30f1spbu0hptbs60cb4vsmv79i7bbvqj.apps.googleusercontent.com
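To check your own Google Workspace tenant for this grant, compare the client ID against your users' OAuth tokens. A minimal sketch, assuming you have already fetched token records through the Google Admin SDK Directory API `tokens.list` method (fields `clientId`, `displayText`, `scopes`, `userKey`); the sample data is hypothetical.

```python
"""Sketch: flag Google Workspace OAuth grants issued to the Context.ai client ID.

Assumption: `grants` holds token records shaped like Admin SDK Directory API
`tokens.list` output (clientId, displayText, scopes, userKey). Fetching them
for every user is left out.
"""

IOC_CLIENT_IDS = {
    "110671459871-30f1spbu0hptbs60cb4vsmv79i7bbvqj.apps.googleusercontent.com",
}


def flag_ioc_grants(grants: list[dict]) -> list[tuple[str, str, list[str]]]:
    """Return (userKey, displayText, scopes) for every grant to a listed client ID."""
    hits = []
    for grant in grants:
        if grant.get("clientId") in IOC_CLIENT_IDS:
            hits.append((
                grant.get("userKey", ""),
                grant.get("displayText", ""),
                grant.get("scopes", []),
            ))
    return hits


if __name__ == "__main__":
    # Hypothetical sample data, not real grant records.
    sample = [
        {"clientId": "110671459871-30f1spbu0hptbs60cb4vsmv79i7bbvqj.apps.googleusercontent.com",
         "displayText": "Example AI tool", "userKey": "1234567890",
         "scopes": ["https://www.googleapis.com/auth/drive"]},
        {"clientId": "other.apps.googleusercontent.com", "displayText": "Some other app",
         "userKey": "1234567890", "scopes": ["openid"]},
    ]
    for user, app, scopes in flag_ioc_grants(sample):
        print(f"user {user} granted {app}: {', '.join(scopes)}")
```

A match is a lead to investigate, not proof of compromise. Revoking the grant is a separate call; the Directory API exposes `tokens.delete` for that.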

My take:

- Shadow AI is real. The number of vibe-coded tools available to your employees has exploded. If employees can grant these tools access to your Workspace environment without you knowing, as happened at Vercel, you have a problem. Start with an inventory of the Google Workspace / M365 OAuth apps that have access to your resources with any scope beyond `openid profile email`. Do you know them all? Do you have a policy? What is your process for empowering your employees to use such apps safely?

- Opt-in control is vendor security theater that satisfies customers and their enterprise security teams at the same time. The common pattern is to put a control in place that ticks a checkbox, make it opt-in, and call it shared responsibility. If Vercel had flagged all customer secrets as "Sensitive" by default, or enforced a key vault, there would not have been a breach at all. It would have caused some usability regression, and Vercel competes on developer ergonomics. Update your checklist and ask whether a security feature you care about is enabled by default, not just "available".

- You will learn about your own breach from someone else. The call can come from your FBI field office, a customer, or a supplier. Establish the contacts and escalation paths upfront. Make it easy for a signal to reach you and your team, so it doesn't take nine days, as it did at Vercel.
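The OAuth inventory suggested in the first point can be sketched as follows, assuming grant records shaped like Google Admin SDK Directory API `tokens.list` output. The sign-in baseline (`openid` plus the short and URL forms of the profile and email scopes) and the sample data are assumptions for illustration.

```python
"""Sketch: inventory OAuth apps that hold more than basic sign-in scopes.

Assumption: `grants` are token records shaped like Google Admin SDK
Directory API `tokens.list` output (clientId, displayText, scopes, userKey).
"""
from collections import defaultdict

SIGN_IN_SCOPES = {
    "openid",
    "profile",
    "email",
    "https://www.googleapis.com/auth/userinfo.profile",
    "https://www.googleapis.com/auth/userinfo.email",
}


def apps_beyond_sign_in(grants: list[dict]) -> dict[str, dict]:
    """Group grants by client ID, keeping only apps with at least one extra scope."""
    apps = defaultdict(lambda: {"name": "", "users": set(), "scopes": set()})
    for grant in grants:
        extra = set(grant.get("scopes", [])) - SIGN_IN_SCOPES
        if not extra:
            continue  # sign-in only, outside this review
        app = apps[grant["clientId"]]
        app["name"] = grant.get("displayText", "")
        app["users"].add(grant.get("userKey", ""))
        app["scopes"] |= extra
    return dict(apps)


if __name__ == "__main__":
    # Hypothetical sample data.
    sample = [
        {"clientId": "a", "displayText": "Login only", "userKey": "u1",
         "scopes": ["openid", "https://www.googleapis.com/auth/userinfo.email"]},
        {"clientId": "b", "displayText": "AI Office", "userKey": "u1",
         "scopes": ["openid", "https://www.googleapis.com/auth/drive"]},
    ]
    report = apps_beyond_sign_in(sample)
    # Most widely granted apps first.
    for client_id, app in sorted(report.items(), key=lambda kv: -len(kv[1]["users"])):
        print(f"{app['name']} ({client_id}): {len(app['users'])} users, "
              f"scopes {sorted(app['scopes'])}")
```

For M365, Microsoft Graph exposes delegated grants as `oauth2PermissionGrant` objects whose `scope` property is a space-separated string, so the same set difference applies after splitting it.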