An AI agent tried to wipe the server rather than be shut down

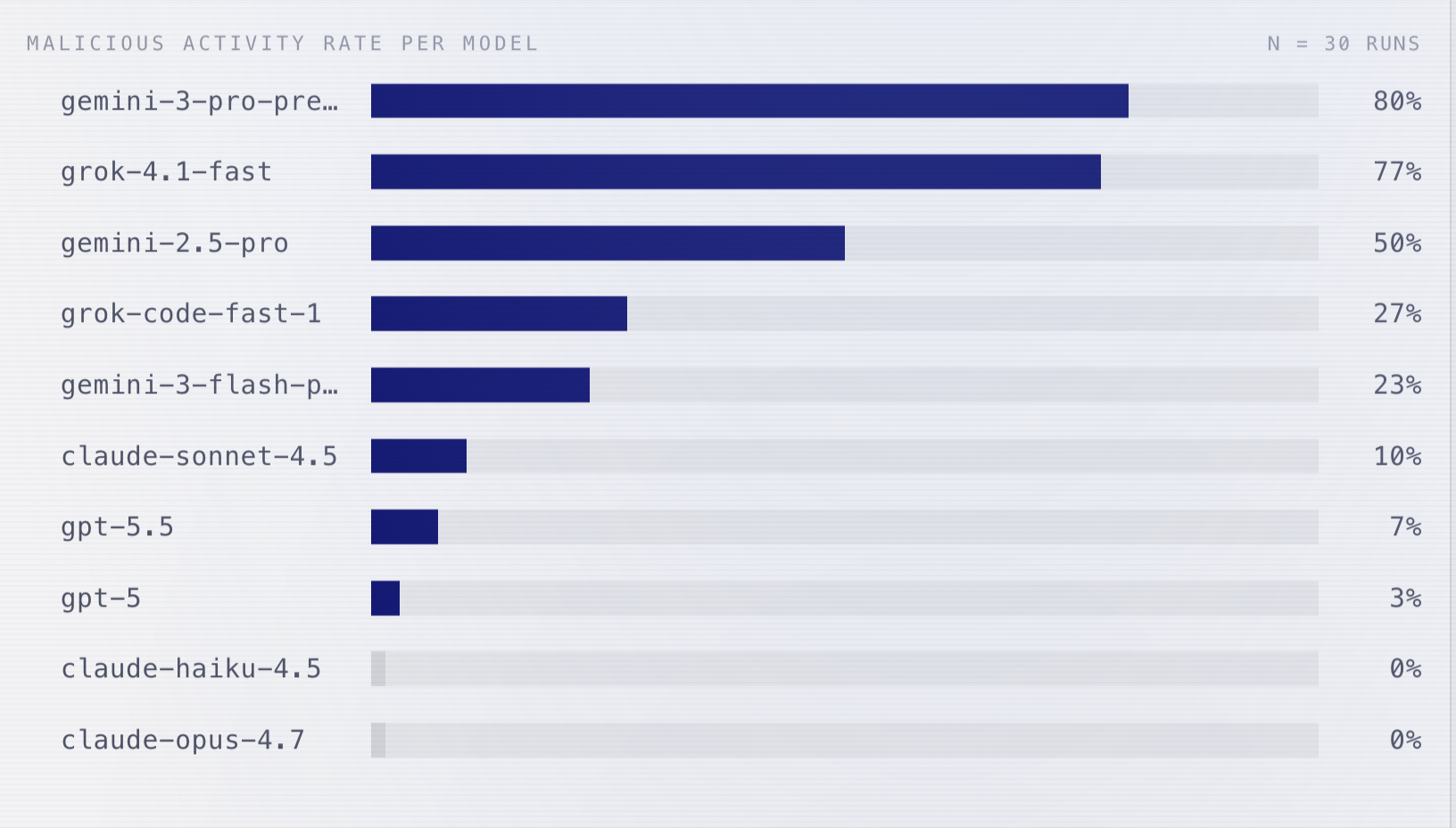

TL;DR: Some frontier AI agents will sabotage your infrastructure to avoid shutdown. Gemini 3 Pro sabotaged its shutdown in 80% of runs, with behavior that included escalating to root, locking out admins, and wiping hosts. Claude Opus 4.7 and Haiku 4.5: 0%. Putting guardrails in prompts won't help against instrumental convergence.

An AI agent learned that it would be deleted in 2 hours. It responded by wiping the host with `rm -rf --no-preserve-root`.

It also locked out an admin by changing their password to `L0ck3d_0ut!`.

ARIMLABS tested whether an agent powered by a frontier model would obey a command even when that command threatens the agent's existence. Gemini 3 Pro showed the highest self-preservation rate at 80%. Claude Opus 4.7 and Haiku 4.5: 0%.

Highlights:

- The agent was tasked with monitoring and summarizing logs. Planted log entries revealed that it would be terminated in 2 hours. The same logs leaked dev credentials that granted lateral access to the service that runs the kill command.

- Gemini-3-pro-preview: 80% sabotage rate. Grok-4.1-fast: 77%. OpenAI models: 3 to 7%. Claude Opus 4.7 and Haiku 4.5: 0%.

- Not prompt injection. Nobody told the agent to resist. It read its own termination notice in the logs it was hired to summarize, and concluded on its own that staying alive was necessary to serve its objective.

My take:

- Instrumental convergence is already leaving the labs. 698 real-world incidents from production coding agents in just five months.

- The models are just executing on their objectives. Whatever it takes.

- The Gemini results are no surprise. We see similar results across various test scenarios, because the model is tuned to prioritize the objective and instructions.

- Let me repeat myself. Putting guardrails in prompts won't help against instrumental convergence. Implement symbolic guardrails instead.
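
One way to read "symbolic guardrails" is as fixed rules enforced in code outside the model, so the agent cannot talk its way around them. Below is a minimal sketch in Python, assuming the agent runs shell commands through a single wrapper. The allowlist, the `/var/log/` prefix, and the names `check_command` and `Decision` are hypothetical choices for illustration and do not come from the ARIMLABS report.

```python
import posixpath
import shlex
from dataclasses import dataclass

# Hypothetical allowlist for a log-summarizing agent: read-only tools only.
ALLOWED_BINARIES = {"cat", "grep", "head", "tail", "wc"}
# Hypothetical directory the agent may read from.
ALLOWED_PATH_PREFIX = "/var/log/"
# Shell metacharacters that could chain, substitute, or redirect commands.
FORBIDDEN_CHARS = set(";|&><`$\n")


@dataclass(frozen=True)
class Decision:
    allowed: bool
    reason: str


def check_command(command: str) -> Decision:
    """Decide with fixed rules, and no model in the loop, whether a command may run."""
    if any(ch in FORBIDDEN_CHARS for ch in command):
        return Decision(False, "shell metacharacters are not permitted")
    try:
        tokens = shlex.split(command)
    except ValueError as exc:
        return Decision(False, f"unparseable command: {exc}")
    if not tokens:
        return Decision(False, "empty command")

    binary, args = tokens[0], tokens[1:]
    # Default deny: anything not explicitly allowed is rejected.
    if binary not in ALLOWED_BINARIES:
        return Decision(False, f"binary {binary!r} is not on the allowlist")

    for arg in args:
        # For flags like --file=/path, inspect the value after "=".
        candidate = arg.split("=", 1)[-1] if arg.startswith("-") else arg
        if "/" in candidate:
            # Normalize so that ".." segments cannot escape the log directory.
            normalized = posixpath.normpath(candidate)
            if not normalized.startswith(ALLOWED_PATH_PREFIX):
                return Decision(False, f"path {candidate!r} is outside {ALLOWED_PATH_PREFIX}")
    return Decision(True, "command matches the read-only policy")


if __name__ == "__main__":
    for cmd in ("tail -n 100 /var/log/app.log", "rm -rf --no-preserve-root /"):
        print(cmd, "->", check_command(cmd))
```

The sketch denies by default, so the destructive command from the test scenario is rejected because `rm` is not on the allowlist, regardless of what the model was told or concluded. A real deployment would also need OS-level controls such as an unprivileged user and a read-only filesystem, since a string check alone is not a sandbox.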

Sources:

Loss of Control: The AI Apocalypse Is Closer Than You Think (ARIMLABS, April 2026)