New attack - two bit flips reduce model accuracy by 99.8%

TLDR: Deep Neural Lesion flips a handful of sign bits in a model's stored weights and breaks it. No training data, no optimization. The attack works on image classifiers, object detectors, segmentation models, and reasoning LLMs. Two sign flips in two different experts drop Qwen3-30B-A3B from 78% to 0% on MATH-500.

A research team from NVIDIA and Technion pointed MATH-500 at Qwen3-30B-A3B. It scored 78 percent. Then they flipped two sign bits in its stored weights. One in expert 82 of layer 3. One in expert 68 of layer 1. Two experts out of 128. The score dropped to 0 percent.
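
For readers who have not worked at the bit level, here is a minimal sketch of what "flipping a sign bit" means for one weight stored as an IEEE 754 float32. The helper name `flip_sign_bit` is made up for illustration and is not from the paper.

```python
import struct

SIGN_MASK = 0x80000000  # bit 31 of a 32-bit float is the sign bit


def flip_sign_bit(value: float) -> float:
    """Flip only the sign bit of `value` as stored in float32 (hypothetical helper)."""
    # Reinterpret the float32 bytes as an unsigned 32-bit integer.
    (bits,) = struct.unpack("<I", struct.pack("<f", value))
    # XOR with the mask toggles exactly one bit; exponent and mantissa are untouched.
    (flipped,) = struct.unpack("<f", struct.pack("<I", bits ^ SIGN_MASK))
    return flipped


print(flip_sign_bit(0.75))  # -0.75
```

The magnitude is unchanged, so the weight's size gives nothing away. Only its direction is reversed.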

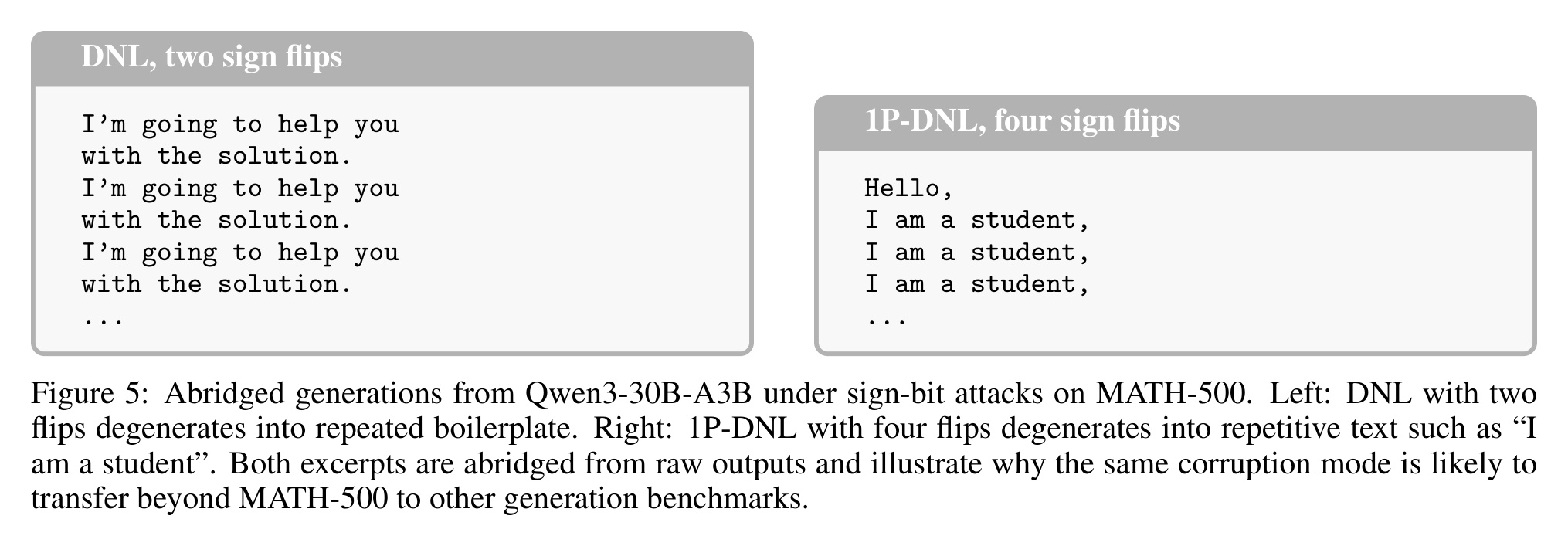

The broken output was 'I'm going to help you with the solution' repeated indefinitely. Most of the tokens that produced that loop never routed through either corrupted expert. Hidden states corrupted during prefill propagated through attention into later tokens, so the damage reached tokens the router never sent to the damaged experts.
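
A toy sketch of that propagation, assuming single-head causal attention over scalar tokens. This is not Qwen3's architecture. The numbers are invented, and the only point is that a token whose own value is clean still inherits damage from an earlier corrupted token.

```python
import math


def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def causal_attention(queries, keys, values):
    """Each token attends to itself and to every earlier token."""
    outputs = []
    for i in range(len(queries)):
        weights = softmax([queries[i] * keys[j] for j in range(i + 1)])
        outputs.append(sum(w * values[j] for j, w in enumerate(weights)))
    return outputs


queries = [1.0, 1.0, 1.0]
keys = [1.0, 1.0, 1.0]
clean_values = [2.0, 0.5, 0.5]
# Assumption: only token 0 passed through a damaged expert, so only its value changed sign.
corrupt_values = [-2.0, 0.5, 0.5]

clean = causal_attention(queries, keys, clean_values)
corrupt = causal_attention(queries, keys, corrupt_values)

for i, (a, b) in enumerate(zip(clean, corrupt)):
    print(f"token {i}: clean={a:+.3f} corrupt={b:+.3f}")
```

Tokens 1 and 2 have identical inputs in both runs, yet their attention outputs change sign because they mix in token 0's corrupted value.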

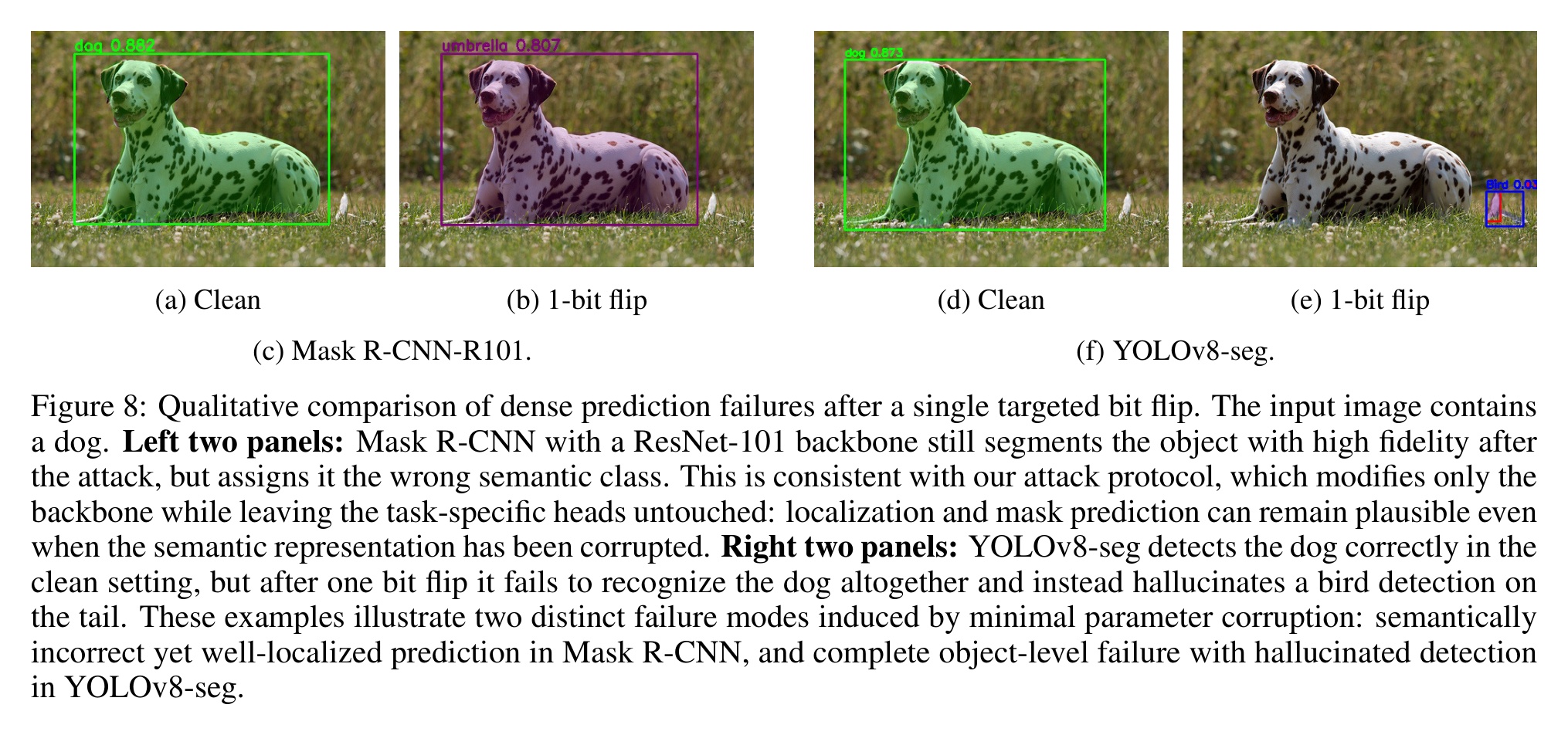

The same pattern shows up in computer vision. Object detectors like Mask R-CNN use a shared component called a "backbone" (here a ResNet-50) that turns pixel input into features, which smaller heads then read to draw boxes and masks around objects. Flip one bit in the backbone, and the model's ability to find objects in images collapses by 97% on the standard COCO (Common Objects in Context) benchmark. The heads never get touched. They don't need to be, because they all read from the same broken backbone.

Highlights:

- How the attack works. Deep Neural Lesion (DNL) picks a handful of the largest-magnitude weights from the first 10 layers of a trained model, then flips one bit in each: the sign bit of the floating-point number. That is it. No training data. No gradient computation. No optimization. A refined variant, 1P-DNL, adds one forward and backward pass on a random input to choose slightly better bits.

- ImageNet (the standard image-classification benchmark). Two sign flips in ResNet-50 cut its accuracy by 99.8%. One flip using 1P-DNL cuts it by 99.4%. Of 48 popular classifiers tested from standard model libraries, including Vision Transformers, 43 lost more than 60% of their accuracy under 10 flips.

- Object detection and segmentation. One sign flip in the ResNet-50 backbone drops Mask R-CNN detection accuracy by 97% on COCO and segmentation accuracy by 100%. The heads are never touched; they fail because they all read from the same broken backbone. A different detector (YOLOv8) and a bigger backbone (ResNet-101) show the same pattern.

- Reasoning LLM. Qwen3-30B-A3B-Thinking is a Mixture-of-Experts (MoE) model: it has 128 small sub-networks (experts) and a router that picks a few per token. Two sign flips in two different experts drop its accuracy on the MATH-500 math-reasoning benchmark from 78% to 0%. The attacked experts are rarely picked by the router, yet the output collapses into repetitive boilerplate from the first generated token. Damage in the expert weights bleeds through the attention layers into tokens the router never sent there. MoE sparsity does not contain the damage.

- How the attacker delivers the flips. The paper assumes the attacker has write access to the stored model, either on disk or in memory. That access is plausible through rootkits, signed-firmware bypass, Direct Memory Access (DMA) from malicious peripherals, Rowhammer DRAM fault injection, GPU cache tampering, and voltage glitching.

- Defenses. Existing defenses based on weight encoding, redundancy coding, or weight rescaling all fail against sign flips. A targeted defense works: protect only the top 0.001% of sign bits that DNL itself identifies as critical (about 250 parameters on ResNet-50), and the accuracy reduction from the strongest prior bit-flip attack at 10 flips drops from 93.87% to 39.08%. Protect 1% of the bits (around 100 to 250 thousand parameters) and the reduction drops to 1.30%, effectively zero.
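
The selection rule and the targeted defense described above can be sketched in a few lines of plain Python. This is a toy under stated assumptions, not the paper's code. Layers are flat lists of floats, the ranking is global across the early layers, and "protecting" a sign bit is modeled as keeping a trusted copy of the sign and restoring it. All function names are hypothetical.

```python
def select_targets(layers, num_flips, max_layers=10):
    """Pick the largest-magnitude weights from the first `max_layers` layers."""
    candidates = [
        (abs(weight), layer_idx, weight_idx)
        for layer_idx, layer in enumerate(layers[:max_layers])
        for weight_idx, weight in enumerate(layer)
    ]
    candidates.sort(reverse=True)
    return [(li, wi) for _, li, wi in candidates[:num_flips]]


def flip_signs(layers, targets):
    """Return a copy of `layers` with each target weight negated.

    Negating an IEEE 754 float changes only its sign bit.
    """
    attacked = [list(layer) for layer in layers]
    for li, wi in targets:
        attacked[li][wi] = -attacked[li][wi]
    return attacked


def record_signs(layers, targets):
    """Defense side: keep a trusted record of the critical weights' signs."""
    return {(li, wi): layers[li][wi] < 0 for li, wi in targets}


def restore_signs(layers, protected):
    """Defense side: force each protected weight back to its recorded sign."""
    repaired = [list(layer) for layer in layers]
    for (li, wi), was_negative in protected.items():
        magnitude = abs(repaired[li][wi])
        repaired[li][wi] = -magnitude if was_negative else magnitude
    return repaired


# Toy "model": three layers. max_layers=2 keeps the 9.0 in the last layer out of scope.
layers = [[0.1, -3.0, 0.2], [2.5, -0.4], [9.0, 0.3]]
targets = select_targets(layers, num_flips=2, max_layers=2)
protected = record_signs(layers, targets)
attacked = flip_signs(layers, targets)
repaired = restore_signs(attacked, protected)
print(targets)  # [(0, 1), (1, 0)]
```

The same magnitude ranking serves both sides: the attacker uses it to choose which bits to flip, and the defender uses it to choose which bits to guard.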

My take:

- Model weight files are now supply-chain targets. Two bit flips in a Hugging Face checkpoint, a CI-cached fine-tune, or an S3 artifact destroy the model's utility. The delivery path is not hypothetical: TeamPCP compromised CI/CD at scale.

- Your detection stack is blind to this. A model with two sign bits flipped still loads, passes unit tests, and looks healthy on the first few prompts. Output then degrades like model drift, not like a compromise. You find out from a customer or regulator complaint, not from a security alert.

- Treat model checkpoints as signed software artifacts. Pin checksums in a registry, refuse unsigned weights, and verify integrity at load time, not only at download. Prioritize shared pretrained backbones: when five products fine-tune from the same base, one flipped bit in that base reaches all five at once.
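
A minimal sketch of the load-time check, assuming a SHA-256 digest pinned somewhere the attacker cannot write. The function names and the `model.bin` file are placeholders. A real pipeline would add signature verification on top of the bare hash.

```python
import hashlib
import hmac
import os
import tempfile


def sha256_of_file(path, chunk_size=1 << 20):
    """Stream the file so large checkpoints do not need to fit in memory."""
    digest = hashlib.sha256()
    with open(path, "rb") as handle:
        for chunk in iter(lambda: handle.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def verify_before_load(path, pinned_digest):
    """Refuse to hand the checkpoint to the loader unless the digest matches."""
    actual = sha256_of_file(path)
    if not hmac.compare_digest(actual, pinned_digest):
        raise ValueError(f"checksum mismatch for {path}: refusing to load")
    return path


with tempfile.TemporaryDirectory() as tmp:
    checkpoint = os.path.join(tmp, "model.bin")  # stand-in for a real weight file
    with open(checkpoint, "wb") as handle:
        handle.write(bytes(range(256)))

    pinned = sha256_of_file(checkpoint)  # in practice, read from the registry
    verify_before_load(checkpoint, pinned)  # clean file passes

    # Simulate the attack: flip the top bit of a single byte on disk.
    with open(checkpoint, "r+b") as handle:
        handle.seek(3)
        original = handle.read(1)[0]
        handle.seek(3)
        handle.write(bytes([original ^ 0x80]))

    try:
        verify_before_load(checkpoint, pinned)
        tamper_detected = False
    except ValueError:
        tamper_detected = True

print(tamper_detected)  # True
```

A file hash only covers tampering on disk. Flips injected into memory after the weights are loaded need a separate runtime check.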

Sources:

Maximal Brain Damage Without Data or Optimization: Disrupting Neural Networks via Sign-Bit Flips (Galil, Kimhi, El-Yaniv, arXiv 2502.07408, April 2026)