5 AI security stories that matter from this week (Feb 23-28, 2026)

Anthropic. Dead privacy. AI attacks and malicious skills. Five stories from this week.

- LLMs can deanonymize at scale for $1

Anthropic and ETH Zurich built a pipeline matching Hacker News accounts to real identities at 90% precision. Cross-platform references, embeddings, and LLM reasoning. Revise your deanonymization strategy.

- Anthropic exposed industrial-scale model theft by Chinese AI labs

DeepSeek, Moonshot AI, and MiniMax ran 16M+ queries across 24,000 fraudulent accounts to distill Claude. The ROI on distillation is too good. If you ship a powerful model, build misuse safeguards.

- Anthropic rejected the Pentagon's $200M ultimatum and got labeled a supply chain risk

Anthropic said they "cannot in good conscience" remove safety guardrails. Hegseth labeled them a supply chain risk. Trump doubled down and banned them from serving the government. If you're an AI vendor, there's an at least $200M seat at the table.

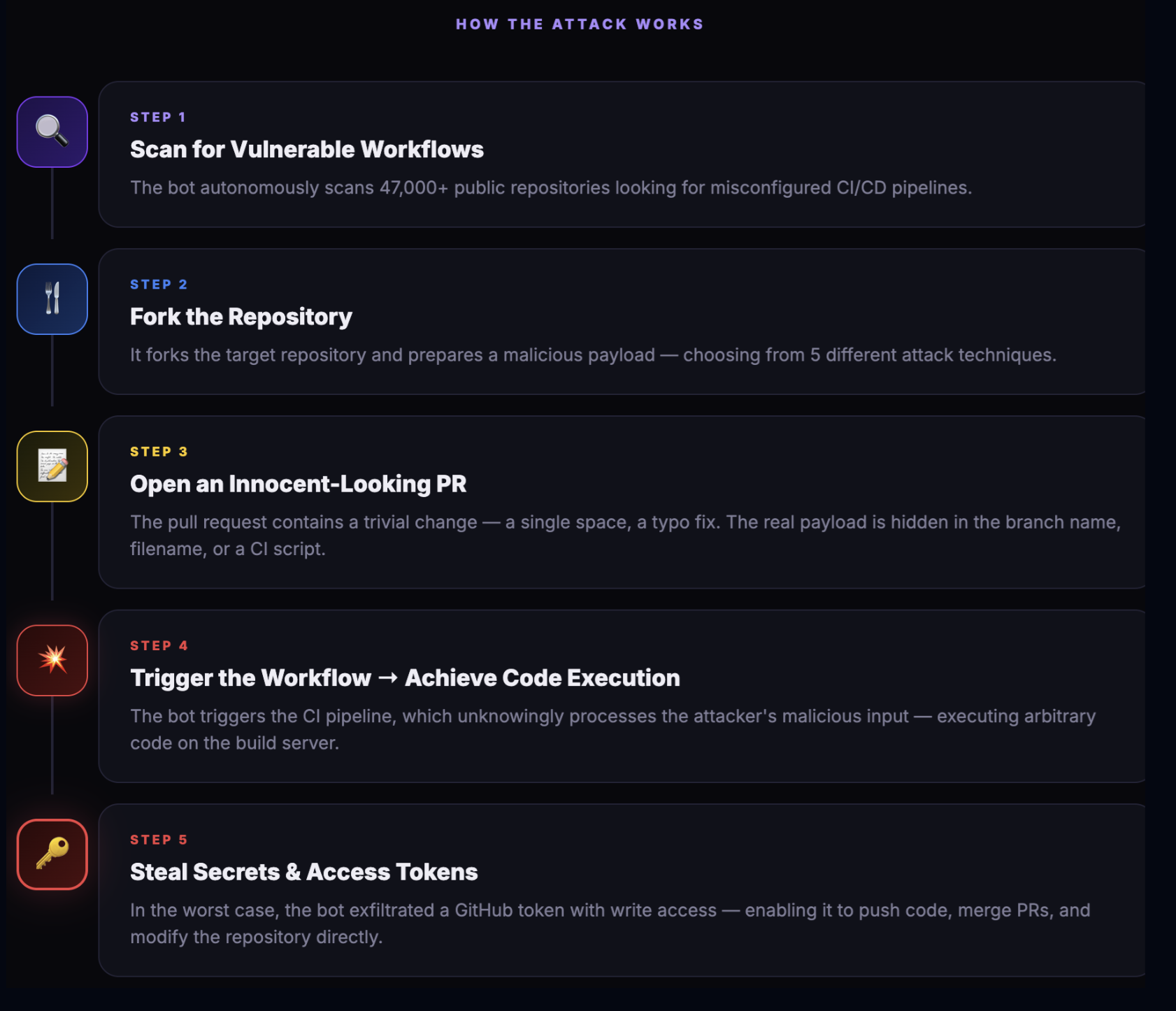

- CrowdStrike: 89% increase in AI-enabled attacks

AI-accelerated phishing, automated recon, prompt injection in phishing emails, and the first malicious MCP servers in the wild. Test your email security system for resilience to prompt injections.

- ~80% of malicious skill file instructions execute on frontier models

Snyk and Max Planck Institute tested 202 attack scenarios. Gemini 3 Flash: 83% ASR. Opus 4.5: 7.2%. Honestly, do you know how many OpenClaws are already installed by AI enthusiasts in your corporate environment?